Strategic Roadmap: Navigating the Transition to Next-Generation Reasoning Models (2024–2026)

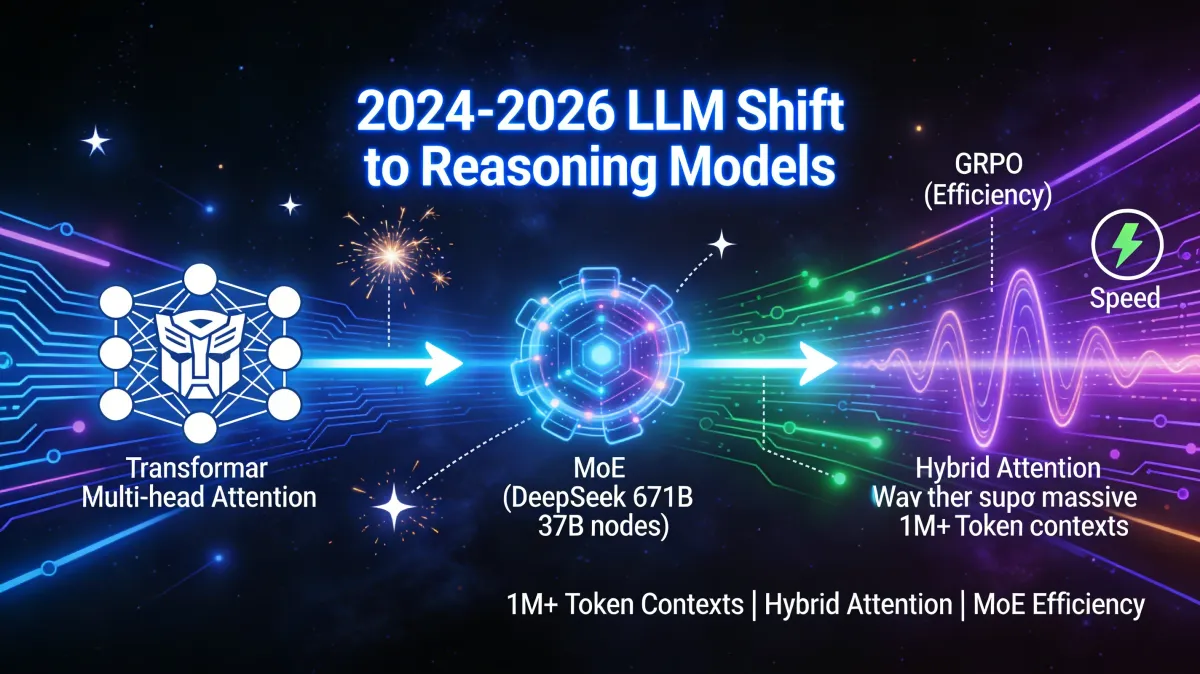

The 2024–2026 shift marks a turning point for LLMs: standard Transformers are giving way to reasoning models that tackle speed, context, and cost. MoE designs such as DeepSeek's 671B-parameter model (37B active per token) and hybrid attention make 1M+ token contexts efficient, while GRPO drives verifiable logic. Together they are a strategic must for AI architects.

The Architectural Shift: From Standard Transformers to Advanced Reasoning

The period between 2024 and 2026 represents a critical inflection point in the lifecycle of Large Language Models (LLMs). As enterprises move beyond initial experimentation, reliance on standard Transformer architectures is becoming a strategic liability. The shift toward advanced reasoning models is driven by the mandate to transcend the inherent limitations of early generative AI, specifically inference speed, context retention, and operational costs. Moving beyond standard architectures is no longer a technical choice but a strategic imperative to secure competitive advantages in inference efficiency and logical reliability.

| Phase | Methodology | Key Features | Primary Limitations |
|---|---|---|---|
| Phase 1: Traditional Vectorization | TF-IDF / Document-Term Matrix | Statistical word counting and tokenization. | Lacks context and semantics; ignores word order and relationships. (see the generated image above) |
| Phase 2: Word Embeddings | word2vec | Low-dimensional vector spaces (100–300 dimensions); captures similarity and synonyms. | One static vector per word, so homonyms (e.g., "bank") cannot be told apart and meaning does not adapt to context. |
| Phase 3: Transformer Era | Encoders (BERT): Masked Language Modeling; Decoders (GPT): Next-Token Prediction | Context-aware self-attention; decoders established the foundation for generative AI. | Quadratic complexity; massive computational overhead for long sequences. |

Strategic Implications: The evolution from simple word vectors to context-aware Transformers enabled the first wave of enterprise RAG (Retrieval-Augmented Generation) and autonomous agents. By utilizing Decoders for "Next Token Prediction," models began to simulate understanding. However, the foundational "Attention" mechanism used in these architectures contains a critical flaw: its computational difficulty grows quadratically with context length, creating a technical bottleneck that forces a choice between depth of context and cost of compute.

Overcoming the Attention Bottleneck: Evolution of Efficiency

The strategic optimization of the Attention mechanism is the primary battleground for model efficiency. Because computational effort grows quadratically with context length (doubling the input roughly quadruples the attention work), processing enterprise-scale datasets such as legal corpora or codebases becomes prohibitively expensive under standard Multi-Head Attention. To mitigate this, the industry has evolved through several architectural variants designed to maintain "long-term memory" while slowing the growth of hardware requirements, above all the memory needed for the key-value (KV) cache, as sequences get longer.
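To make the quadratic growth concrete, here is a minimal single-head scaled dot-product attention in pure Python. It is an illustrative sketch, not how production kernels (which batch and fuse these loops on GPUs) are written; the function name `attention` and the toy inputs are assumptions. The inner loop computes one score per key for every query, so n tokens produce n × n scores.

```python
import math


def attention(queries, keys, values):
    """Naive single-head scaled dot-product attention (illustrative only).

    queries and keys are lists of n vectors of width d; values are n vectors.
    Returns (outputs, scores_computed) so the n * n growth is visible.
    """
    d = len(queries[0])
    outputs = []
    scores_computed = 0
    for q in queries:
        # One score per key: n queries x n keys = n * n scores in total.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        scores_computed += len(scores)
        # Numerically stable softmax turns scores into attention weights.
        top = max(scores)
        exps = [math.exp(s - top) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        # Output is the attention-weighted average of the value vectors.
        width = len(values[0])
        outputs.append([sum(w * v[j] for w, v in zip(weights, values)) for j in range(width)])
    return outputs, scores_computed


if __name__ == "__main__":
    for n in (10, 20, 40):
        xs = [[float(i), 1.0] for i in range(n)]  # toy token vectors
        _, count = attention(xs, xs, xs)
        print(f"{n:>3} tokens -> {count:>5} query-key scores")
```

Each doubling of n quadruples the count (100, 400, 1,600); at 1,000 tokens the same loop would compute 1,000,000 scores, and that quadratic term is the cost described above.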

- Multi-Head Attention (MHA): The original standard. While precise, it is memory- and compute-hungry: every head keeps its own keys and values, so the KV cache grows with the number of heads times the sequence length.

- Multi-Query Attention (MQA): An early optimization in which all query heads share a single key/value head. This sharply shrinks the KV cache and speeds up decoding, but the quality trade-off can make it a poor fit for complex reasoning.

- Grouped-Query Attention (GQA): A more balanced compromise in which query heads are split into groups and each group shares one key/value head. It is the current standard for balancing speed and accuracy in models such as Llama 3.

- Multi-Head Latent Attention (MLA): A more sophisticated design, introduced with DeepSeek-V2, built on latent KV compression. By projecting keys and values into a low-dimensional latent space and caching only that latent, it drastically reduces the size of the KV cache, the primary bottleneck when serving long-context models.

- Hybrid Attention (Qwen3.5/Next & NVIDIA Nemotron-3): The current frontier. Models such as Qwen3.5 interleave three linear-attention layers with one full-attention layer (a 3:1 ratio). This acts as an architectural hedge: the linear layers (similar in spirit to Mamba-2) keep a fixed-size state and provide extreme speed, while the periodic full-attention layers prevent the "memory loss" common in purely linear models.
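The memory side of these variants can be estimated with simple arithmetic. The sketch below computes KV-cache size per variant for a hypothetical 80-layer model with 64 query heads of width 128, 8 KV groups for GQA, and an assumed MLA latent of 512 plus a 64-wide positional key; all of these numbers are illustrative assumptions, not the specification of any particular model. It counts cached elements only and ignores serving details such as paging or cache quantization.

```python
from dataclasses import dataclass


@dataclass
class AttentionConfig:
    """Hypothetical model shape used only for illustration."""
    n_layers: int = 80
    n_query_heads: int = 64
    head_dim: int = 128
    n_kv_heads: int = 8         # GQA groups (assumed)
    mla_latent_dim: int = 512   # MLA compressed KV width (assumed)
    mla_rope_dim: int = 64      # MLA decoupled positional key width (assumed)


def kv_elements_per_token_per_layer(variant: str, cfg: AttentionConfig) -> int:
    if variant == "MHA":
        return 2 * cfg.n_query_heads * cfg.head_dim   # K and V for every head
    if variant == "GQA":
        return 2 * cfg.n_kv_heads * cfg.head_dim      # K and V once per group
    if variant == "MQA":
        return 2 * 1 * cfg.head_dim                   # a single shared K/V head
    if variant == "MLA":
        return cfg.mla_latent_dim + cfg.mla_rope_dim  # one compressed latent per token
    raise ValueError(f"unknown variant: {variant}")


def kv_cache_bytes(variant: str, cfg: AttentionConfig, seq_len: int, bytes_per_elem: int = 2) -> int:
    """Total KV-cache size for one sequence; bytes_per_elem=2 assumes BF16."""
    return cfg.n_layers * seq_len * kv_elements_per_token_per_layer(variant, cfg) * bytes_per_elem


if __name__ == "__main__":
    cfg = AttentionConfig()
    for variant in ("MHA", "GQA", "MQA", "MLA"):
        gib = kv_cache_bytes(variant, cfg, seq_len=128_000) / 2**30
        print(f"{variant}: {gib:8.1f} GiB for a 128K-token sequence")
```

Under these assumptions the cache for one 128K-token sequence shrinks from about 312 GiB (MHA) to about 39 GiB (GQA) and about 11 GiB (MLA). MQA comes out smaller still, but at the quality cost noted above, which is why cache compression rather than raw compute often decides how long a context a serving cluster can afford.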

Architectural ROI: These optimizations allow modern models to support context windows of 1M+ tokens (as seen in NVIDIA Nemotron-3). For the enterprise, this enables processing entire technical libraries in a single prompt without the quadratic, budget-breaking growth in hardware costs associated with older architectures.
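Why a 3:1 hybrid keeps million-token contexts affordable can be sketched by counting cached state. In the toy model below (all sizes are hypothetical), each full-attention layer stores `kv_per_token` elements for every token, while each linear layer keeps a fixed-size recurrent state regardless of length. The layer pattern follows the three-linear-to-one-full ratio described above, but the helper names are invented for this illustration.

```python
def layer_schedule(n_layers: int, linear_per_full: int = 3) -> list[str]:
    """Hybrid stack: `linear_per_full` linear layers, then one full-attention layer, repeated."""
    period = linear_per_full + 1
    return ["full" if (i + 1) % period == 0 else "linear" for i in range(n_layers)]


def cache_elements(schedule: list[str], seq_len: int,
                   kv_per_token: int = 2048,                   # hypothetical per-token K/V width
                   linear_state: int = 16 * 128 * 128) -> int:  # hypothetical fixed state size
    total = 0
    for kind in schedule:
        if kind == "full":
            total += seq_len * kv_per_token  # grows with every token in context
        else:
            total += linear_state            # constant, independent of seq_len
    return total


if __name__ == "__main__":
    n_layers = 48
    hybrid = layer_schedule(n_layers)
    dense = ["full"] * n_layers
    for seq_len in (8_000, 128_000, 1_000_000):
        ratio = cache_elements(dense, seq_len) / cache_elements(hybrid, seq_len)
        print(f"{seq_len:>9,} tokens: all-full stack needs {ratio:.2f}x the cache of the 3:1 hybrid")
```

The ratio approaches 4 as the context grows, because the linear layers' fixed state becomes negligible. The remaining full-attention layers still grow with length, which is the price paid for avoiding the "memory loss" of purely linear stacks.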

Scaling Intelligence: Mixture of Experts (MoE) and Hybrid Architectures

As models target higher intelligence tiers, the "Dense" approach (activating every parameter for every token) is economically unsustainable. Sparse architectures, specifically Mixture of Experts (MoE), allow for trillion-parameter intelligence while keeping the per-token inference compute of a mid-sized model.
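The core mechanism is a router that sends each token to only a few experts. The sketch below uses a generic softmax top-k router with toy experts; it is an assumption-laden illustration, not DeepSeek's, Moonshot's, or OpenAI's routing, which add shared experts, load-balancing terms, and different scoring functions. Only the selected experts are ever evaluated, which is where the savings come from.

```python
import math
from typing import Callable, Sequence

Expert = Callable[[list[float]], list[float]]


def softmax(xs: Sequence[float]) -> list[float]:
    top = max(xs)
    exps = [math.exp(x - top) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route(router_logits: Sequence[float], k: int) -> dict[int, float]:
    """Generic (assumed) softmax top-k router: keep k experts, renormalize gates to sum to 1."""
    probs = softmax(router_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in chosen)
    return {i: probs[i] / norm for i in chosen}


def moe_forward(x: list[float], experts: Sequence[Expert],
                router_logits: Sequence[float], k: int) -> tuple[list[float], list[int]]:
    """Gate-weighted sum over the selected experts; unselected experts are never called."""
    gates = route(router_logits, k)
    out = [0.0] * len(x)
    for i, g in gates.items():
        y = experts[i](x)
        out = [o + g * yi for o, yi in zip(out, y)]
    return out, sorted(gates)


if __name__ == "__main__":
    # Toy experts: expert i simply scales its input by (i + 1).
    experts = [lambda x, s=i + 1: [s * v for v in x] for i in range(4)]
    # In a real model the router logits come from a learned projection of x.
    y, used = moe_forward([1.0, 2.0], experts, router_logits=[0.1, 2.0, 0.3, 1.5], k=2)
    print("experts used:", used, "output:", y)
```

With 4 experts and k = 2, half the experts run for this token; DeepSeek-style models apply the same idea at 8 routed experts out of 256.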

- DeepSeek-Style MoE: Has 671B total parameters, with 256 routed experts in each MoE layer. Because only 8 routed experts (plus a shared expert) are activated per token, each token passes through roughly 37B parameters.

- Moonshot AI (Kimi K2.5): Pushes the frontier further with 1T (1,000B) total parameters, using a 384/8/1 sparse MoE (SMoE) structure: 384 experts, 8 active per token, plus 1 shared expert.

- GPT-OSS: Operates on a leaner 32-128/4 structure (32 experts in gpt-oss-20b and 128 in gpt-oss-120b, with 4 active per token in both), demonstrating the flexibility of sparse scaling across different hardware tiers.

- Gated Mechanisms (Qwen Family): Uses "Gated DeltaNet" layers, which implement the "Gated Delta Rule," as the linear layers of its hybrid stack. The gate lets the model decay stale memory, while the delta rule overwrites only what a new input contradicts, so the fixed-size state retains the information most relevant to multi-step problem solving.

- LiquidAI (LFM2.5): Represents a distinct structural direction rooted in "Liquid" neural networks, focusing on extreme efficiency and continuous-time processing rather than on conventional Transformer designs alone.
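The gated delta rule behind Gated DeltaNet can be written as a single state update, S_t = α_t · S_{t-1}(I − β_t k_t k_tᵀ) + β_t v_t k_tᵀ, where α_t is the decay gate and β_t the write strength. The sketch below implements that update for a single head in pure Python, following the formulation published for Gated DeltaNet; it omits the normalization, short convolutions, and chunked parallel kernels a real implementation (including Qwen's) would use, and the function names are hypothetical.

```python
Matrix = list[list[float]]


def gated_delta_step(S: Matrix, k: list[float], v: list[float], alpha: float, beta: float) -> Matrix:
    """One single-head update of the fixed-size state S (d_v rows x d_k columns).

    S_new = alpha * S (I - beta k k^T) + beta v k^T
          = alpha * (S - beta (S k) k^T) + beta v k^T
    Sketch only: no normalization, convolution, or chunked parallel form.
    """
    d_v, d_k = len(S), len(k)
    Sk = [sum(S[i][j] * k[j] for j in range(d_k)) for i in range(d_v)]  # what memory returns for k now
    return [[alpha * (S[i][j] - beta * Sk[i] * k[j]) + beta * v[i] * k[j]
             for j in range(d_k)]
            for i in range(d_v)]


def read(S: Matrix, q: list[float]) -> list[float]:
    """Query the memory: o = S q."""
    return [sum(row[j] * q[j] for j in range(len(q))) for row in S]


if __name__ == "__main__":
    S = [[0.0, 0.0], [0.0, 0.0]]
    key = [1.0, 0.0]                                                  # unit-norm key
    S = gated_delta_step(S, key, v=[3.0, 4.0], alpha=1.0, beta=1.0)
    S = gated_delta_step(S, key, v=[5.0, 6.0], alpha=1.0, beta=1.0)  # overwrite, not accumulate
    print(read(S, key))
    S = gated_delta_step(S, key, v=[0.0, 0.0], alpha=0.5, beta=0.0)  # pure decay: forget half
    print(read(S, key))
```

With a unit-norm key and β = 1, the second write replaces [3, 4] with [5, 6] instead of summing to [8, 10]; setting β = 0 and α = 0.5 then halves the stored value to [2.5, 3.0], which is the gate decaying stale memory.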

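Strategic Implications: The shift from "Dense" to "Sparse" models directly impacts the bottom line. Because compute per token scales with active rather than total parameters, MoE architectures cut the "Cost per Token" and allow 1T-parameter intelligence to be served at roughly the inference compute of a 40B-parameter dense model. All expert weights must still be held in (possibly distributed) memory, so the savings show up in compute and throughput more than in hardware footprint. This fundamentally changes the ROI calculations for large-scale AI deployment.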
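The "Cost per Token" argument reduces to a ratio of active to total parameters. The sketch below uses the common rule of thumb of about 2 FLOPs per active parameter per generated token (an approximation that ignores attention and routing overhead) together with the DeepSeek figures quoted above; the helper name and the 1-byte-per-parameter FP8 assumption are illustrative.

```python
def moe_economics(total_params: int, active_params: int, bytes_per_param: float) -> dict[str, float]:
    """Rough per-token economics of a sparse MoE versus a dense model of the same total size."""
    flops_dense = 2 * total_params    # rule of thumb: ~2 FLOPs per parameter per token
    flops_sparse = 2 * active_params  # only routed-to parameters do work
    return {
        "active_fraction": active_params / total_params,
        "compute_saving_vs_dense": flops_dense / flops_sparse,
        # Every expert must stay resident, so memory tracks TOTAL parameters.
        "weight_memory_bytes": total_params * bytes_per_param,
    }


if __name__ == "__main__":
    # Assumes FP8 weights, i.e. 1 byte per parameter.
    stats = moe_economics(total_params=671_000_000_000, active_params=37_000_000_000, bytes_per_param=1)
    print(f"active fraction:         {stats['active_fraction']:.1%}")
    print(f"compute saving vs dense: {stats['compute_saving_vs_dense']:.1f}x")
    print(f"weight memory (FP8):     {stats['weight_memory_bytes'] / 1e9:.0f} GB")
```

About 5.5% of the weights do work for each token, roughly an 18x compute saving versus a dense 671B model, yet about 671 GB of FP8 weights must still be kept in memory.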

Hardware Autonomy and Performance Optimization

A critical component of modern AI strategy is "hardware autonomy": reducing dependency on standard NVIDIA/CUDA stacks to ensure supply-chain resilience. This is no longer just about cost; it is about geopolitical and operational survival.

| Optimization Type | Implementation | Strategic Impact |
|---|---|---|
| PTX (Low-Level) | DeepSeek / Specialized Kernels | Critical Linkage: DeepSeek used customized PTX instructions (PTX is NVIDIA's low-level instruction set beneath CUDA) in its communication kernels to extract full performance from export-compliant H800 GPUs with reduced interconnect bandwidth. PTX remains NVIDIA-specific, so this buys independence from high-level CUDA libraries rather than from NVIDIA hardware. (see the generated image above) |
| FP8 Weights | DeepSeek, Kimi, Qwen, NVIDIA | Now standard on H100/Blackwell; halves weight memory relative to 16-bit formats and can roughly double throughput with minimal precision loss. |
| MXFP4 | OpenAI (GPT-OSS) | A 4-bit microscaling format from the Open Compute Project (OCP) MX specification, used for GPT-OSS's MoE weights. Newer GPUs such as Blackwell support it natively; elsewhere it needs specialized kernels, so it is not universally hardware-compatible. |
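The memory claims in the table follow from bits per weight, and MXFP4's block structure explains why it needs dedicated kernels. The sketch below assumes the OCP Microscaling layout for MXFP4 (blocks of 32 FP4 E2M1 values sharing one 8-bit power-of-two scale, about 4.25 bits per weight) and rounds to the nearest representable value, with ties going to the smaller magnitude for simplicity. It is an illustrative reimplementation, not OpenAI's or NVIDIA's kernel code, and it ignores the scale's exponent-range limits.

```python
import math

# Assumed OCP MX layout: FP4 E2M1 elements, one shared power-of-two scale per block.
FP4_E2M1_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)
E2M1_EMAX = 2     # largest E2M1 value is 6 = 1.5 * 2**2
BLOCK_SIZE = 32   # elements sharing one 8-bit scale

BITS_PER_WEIGHT = {"BF16": 16.0, "FP8": 8.0, "MXFP4": 4.0 + 8.0 / BLOCK_SIZE}


def weight_gib(n_params: int, fmt: str) -> float:
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 2**30


def quantize_e2m1(x: float) -> float:
    """Nearest FP4 E2M1 value (saturating at +/-6); ties go to the smaller magnitude."""
    mag = min(FP4_E2M1_MAGNITUDES, key=lambda m: abs(abs(x) - m))
    return math.copysign(mag, x)


def mxfp4_quantize(block: list[float]) -> tuple[int, list[float]]:
    """Return (shared power-of-two exponent, FP4 codes) for one 32-element block."""
    assert len(block) == BLOCK_SIZE
    amax = max(abs(v) for v in block)
    if amax == 0.0:
        return 0, [0.0] * BLOCK_SIZE
    # Scale so the largest element lands in E2M1's top binade; exponent range not checked.
    shared_exp = math.floor(math.log2(amax)) - E2M1_EMAX
    scale = 2.0 ** shared_exp
    return shared_exp, [quantize_e2m1(v / scale) for v in block]


def mxfp4_dequantize(shared_exp: int, codes: list[float]) -> list[float]:
    return [c * 2.0 ** shared_exp for c in codes]


if __name__ == "__main__":
    for fmt in BITS_PER_WEIGHT:
        print(f"{fmt:>5}: {weight_gib(671_000_000_000, fmt):7.1f} GiB for 671B weights")
    exp, codes = mxfp4_quantize([12.0, 9.0, -0.7] + [0.0] * 29)
    print(exp, mxfp4_dequantize(exp, codes)[:3])
```

For 671B weights this gives roughly 1,250 GiB in BF16, 625 GiB in FP8, and 332 GiB in MXFP4. The sample block shows the accuracy cost: 9.0 comes back as 8.0 and -0.7 as -1.0, because every value in the block must share one scale.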

Strategic Advice: Architects must prioritize a "Hardware Agnostic" or "Diversified" compute stack. Models optimized for FP8 or low-level PTX can deliver up to roughly 2x performance on existing hardware, provided that hardware supports these paths (FP8 tensor cores require Ada- or Hopper-generation NVIDIA GPUs or newer). Diversifying away from pure CUDA dependency mitigates the risks associated with global GPU shortages and export-controlled environments.

The Reasoning Roadmap: 2024–2026 Timeline

The "Reasoning Boom" marks the transition from pattern matching to verifiable logical processing. Models are now being trained not just to be "helpful," but to provide answers with explicit justification.

Chronological Release Schedule

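- Late 2024: Paradigm shift initiated by OpenAI o1-preview (September 2024) and DeepSeek's R1 line (R1-Lite-Preview in November 2024, with the full open-weight DeepSeek R1 following in January 2025).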

- Early 2025: Mainstream expansion with Claude 3.7 Sonnet, Gemini 2.5 Pro, Grok 3 Beta, and the Qwen family expansion.

- Late 2025 – Early 2026 Outlook:

  - Calendar weeks 5–6: Kimi K2.5 (1T parameters), Step 3.5 Flash, Qwen3-Coder-Next.

  - Calendar weeks 7–8: GLM-5, MiniMax 2.5, Nanbeige 4.1, Qwen 3.5, Ling/Ring 2.5, and Liquid LFM2.5 (24B A2B, i.e., 24B total parameters with about 2B active).

Next-Gen Training (Reasoning RL)

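This era is defined by GRPO (Group Relative Policy Optimization) and RLVR (Reinforcement Learning with Verifiable Rewards). Standard RLHF scores outputs with a reward model learned from human preferences; RLVR instead rewards outputs that pass automatic checks (a matching final answer, passing unit tests), and GRPO removes PPO's separate value model by scoring each sampled answer relative to the other answers drawn for the same prompt. These techniques focus on:

- Logical Grounding: Training models to provide justifications for every step.

- Format Correctness: Ensuring strict adherence to complex output structures.

- SFT + Rejection Sampling: Using synthetic data, "cold-start" supervised fine-tuning, and rejection sampling (keeping only generations that pass verification) to align models before the reasoning reinforcement phase.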
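The two ideas can be sketched together: an RLVR-style reward that checks output format and the final answer programmatically, and GRPO's group-relative advantage, which standardizes each sample's reward against the other samples drawn for the same prompt instead of consulting a learned value model. The "Answer: <value>" convention and the 0.0/0.1/1.0 reward levels are hypothetical choices for illustration; the policy-gradient update that would consume these advantages is omitted, and implementations differ on details such as population versus sample standard deviation.

```python
import re
import statistics

ANSWER_PATTERN = re.compile(r"Answer:\s*(\S+)\s*$")  # hypothetical required output format


def verifiable_reward(completion: str, reference: str) -> float:
    """Rule-based reward (hypothetical levels): 0.0 malformed, 0.1 well-formed but wrong, 1.0 correct."""
    match = ANSWER_PATTERN.search(completion)
    if match is None:
        return 0.0  # format violation
    return 1.0 if match.group(1) == reference else 0.1


def group_relative_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """GRPO-style advantage: (reward - group mean) / group std, using population std here."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


if __name__ == "__main__":
    # A group of sampled completions for the same prompt, whose reference answer is "42".
    group = [
        "6 * 7 = 42. Answer: 42",
        "6 * 7 = 41. Answer: 41",
        "The answer is forty-two.",
        "Multiply: 42. Answer: 42",
    ]
    rewards = [verifiable_reward(c, "42") for c in group]
    for completion, r, a in zip(group, rewards, group_relative_advantages(rewards)):
        print(f"reward={r:.1f} advantage={a:+.2f}  {completion}")
```

Correct answers receive positive advantages and wrong or malformed ones negative advantages, so the policy is pushed toward verifiably correct, well-formatted reasoning without a separate critic network; a group where every sample scores the same yields zero advantage and therefore no learning signal.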

Strategic Summary: The speed of this cycle is unprecedented. To avoid technical obsolescence, organizations must align procurement with 6-month release windows. The new standard for enterprise AI is the convergence of Sparse MoE architectures for cost, Hybrid Attention for context, and GRPO-trained reasoning for verifiable accuracy. Leaders must shift investment toward models that demonstrate these efficiencies to maintain a viable AI ROI through 2026.